MIL

Fighting racism and hate speech with community solutions

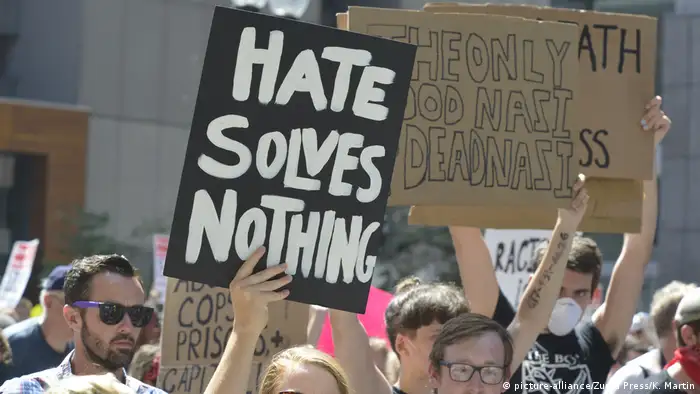

Automation and AI are increasingly being used to spread racism and hate speech on social media. Community-based solutions should have a role in fighting online propaganda and disinformation, says Renata Avila.

Social media companies have come under scrutiny for their role in amplifying hate speech online and their failure to adopt policies that tie their hate speech rules directly to international human rights law. In fact, there is no consensus across social media platforms and jurisdictions on what they consider to be hate speech and how their automated systems should try to enforce their rules to prevent some forms of speech on their platforms.

Community involvement needed

As the platforms increasingly use automation or artificial intelligence tools to remove hate speech, diverse, international communities have little involvement in designing the responses. Solutions to fix the platforms remain remote and non-democratic, a field of experimentation reserved for engineers. Their effectiveness is still broadly questioned and needs to be tested further.

Our traditional digital tactics to counter hate speech are obsolete or weak when the hate amplifier is a sophisticated, opaque automated machine and the campaigns target racial groups. Today, communities need to educate social network systems on top of educating people, especially when racial minorities are targeted with sophisticated campaigns designed to spread fear and hate.

And as we, the users, the citizens, wait for alternative tools that promote individual autonomy, security and free expression instead of the monetization of fear and hate, creative ways to tackle the problem have emerged from the most affected communities. Those tactics involve de-amplification, de-monetization, education, counter-speech, reporting and training.

Resisting racist fear campaigns

A racist, hostile online environment where citizens can be targeted because of their ethnicity can be a minefield. It can erode civic duties and civil and political rights, limiting people’s ability to exercise rights such as voting. Hate speech can be an effective tool to suppress the votes of a targeted population. Reversing a campaign of hate and fear targeting Black voters in particular during the 2020 elections in the US is the mission of Mutale Nkonde and her team, “AI for the People”. In partnership with Moveon.org, they launched an experiment to test whether a fact-based campaign engages Black voters more effectively than the disinformation campaign currently targeting them.

The battlefield, the social networks, will be used strategically to reverse online propaganda and attempt to drown out COVID-19 disinformation on Black Twitter, an online subculture on the social media platform. AI for the People has been tracking racially targeted disinformation from automated social media accounts spreading messages intended to dissuade Black Americans from voting.

After the election, the campaign will evaluate whether organized racial communities, collaborating in a coordinated, crowdsourced, massive effort to counter the disinformation targeting them, can reverse a trend: reducing online engagement with COVID-19 disinformation and replacing it with social media messages containing videos that encourage Black voters to come out to #VoteDownCOVID.

Will the truth about the coronavirus win the online engagement race? It is yet to be determined. But at least the campaign, which is carefully measuring online engagement with #VoteDownCOVID against the performance of disinformation messages, will shed light on innovative interventions that we can design to train the algorithm to amplify human rights. Then we can address the systems and the bigger issues of gender, racial and economic inequalities coded into our societies.

The international human rights framework should be the guiding design principle for any and all online platforms. They should be designed to enhance freedom of expression, anti-discrimination, equality, and equal and effective public participation. Examples like #VoteDownCOVID provide us with evidence of harm, but also of the possibility of designing something better, with the help of many.

Renata Avila is a Race and Technology Fellow at the Stanford Institute for Human-Centered Artificial Intelligence / Center for Comparative Studies in Race and Ethnicity (CCSRE).

- Date 06.11.2020

- Author Renata Avila

- Permalink https://p.dw.com/p/3kxpS