guest post

Internet addiction isn't the problem, it's the symptom

If we want technology that values well-being over engagement, we need to rebuild trust between users and tech companies.

By Cathleen Berger

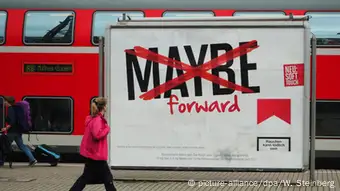

Ever caught yourself shuddering at the supermarket checkout when you see the graphic pictures on cigarette packs warning would-be smokers of the risks?

These days, I wonder what the equivalent images on the back of an iPhone would be: A family at the dinner table, each member hunched over their device? A surveillance camera in a bedroom? An unshowered, half-starved teenager immersed in their VR glasses?

It's hardly news that every company, app, and platform wants our attention. Not a day goes by without articles about how smartphones and social media commandeer our time and attention at the cost of our well-being. And there's a growing movement of advocacy groups, researchers, and concerned parents committed to making technology less addictive.

Addressing addictive patterns in technology is a noble undertaking. But it's also short-sighted and insufficient. Addictive technology is a symptom, not the root problem. The underlying cause is the tech sector's dominant ad-driven business model that relies on surveillance.

Attention economy

It's no accident that Facebook, Twitter, and other applications are so hard to disconnect from. They're built that way. In today’s attention economy, competition is no longer just about building the best, most appealing, distinctive, or convenient product; it is about who has access to the most accurate data. This attention economy is also an addiction economy: advertisers and data brokers actively exploit human weaknesses and our natural vulnerability to addiction, gamifying our experiences and manipulating our subconscious to gain an edge on us (and our money).

This mercenary technology seems to be everywhere, and it's only growing more prevalent and harder to control. Social media feeds, messaging apps, and shopping recommendations are just one part of the story. There are cameras, trackers, and sensors everywhere, private and public, and it’s often not clear how to opt out. Decisions about your medical treatment, your job, your chances of getting a mortgage, and more are increasingly made by automated, closed systems that draw on opaque data sources. How do you challenge that power? Worse still, how do you even know who's behind it: a business or a government?

If you can't even begin to ask questions, to scrutinize, to opt out, there's no choice, no agency, and, consequently, no trust.

The erosion of our trust in tech has repercussions beyond addiction. Amoral algorithms and revenue-hungry companies can undermine the stability of markets, of political systems, and of entire networked societies. It is little surprise that we’re now living through a techlash: a widespread feeling that neither governments nor companies are able, or willing, to protect our rights and freedoms. To create more responsible, ethical technology, we need to talk about trust between companies and users.

So how do we rebuild trust?

One part of the solution is growing the movement for more responsible technology through projects like Time Well Spent, the Internet Commission, and Chupadados. We should also encourage the rising number of manifestos and commitments to build and design technologies that are conscious of their potential for harm and exploitation. These efforts reflect a much-needed push toward giving users more control, even at the expense of click-driven revenue.

Another part of the solution: more common-sense regulation. It took years to understand the addictive power of tobacco and bring it to light, but once it was apparent, regulators worldwide began to narrow the space for advertising. First they punished misleading or false claims, then they banned tobacco ads from TV and radio, and finally they required warning messages and pictures to be displayed clearly on the product itself. In tech, there are already bright spots, like the GDPR in Europe or the proposed Honest Ads Act in the U.S.

A third part of the solution is for tech companies to build better products. Safer and more transparent digital tools would go a long way toward regaining consumers’ trust.

Trust has to be earned, not once but over and over again. This is true between people, and it's true when it comes to technological change. Businesses benefiting from the digital economy need to consider its effects on society at large. Governments need to invest in solving social and political problems rather than expecting tech to solve these issues for them. In order to trust the technologies that surround us, people need to know how those technologies affect them and be able to choose an alternative. That might sound simple, but choosing an alternative is hard if the mechanisms behind the tech are utterly obscure. There is more than one vision of the future, and it is up to us to start imagining the ones we actually want.

Cathleen Berger is currently heading up Mozilla’s work on Global Governance and Policy Strategy. She tweets at @_cberger_.

(am/lw)

- Date 11.04.2019

- Permalink https://p.dw.com/p/3GZVE